The first section for SC-100 study covers:

- Identify the integration points in an architecture by using Microsoft Cybersecurity Reference Architecture (MCRA)

- Translate business goals into security requirements

- Translate security requirements into technical capabilities, including security services, security products, and security processes

- Design security for a resiliency strategy

- Integrate a hybrid or multi-tenant environment into a security strategy

- Develop a technical and governance strategy for traffic filtering and segmentation


Microsoft Cybersecurity Reference Architecture (MCRA)

Microsoft has a reference architecture called MCRA. Let’s see what it is and how it helps you.

The reference architectures consist primarily of detailed technical diagrams covering Microsoft cybersecurity capabilities, Zero Trust user access, security operations, operational technology (OT), multi-cloud and cross-platform capabilities, attack chain coverage, Azure-native security controls, and security organizational functions.

As the diagram below shows, the security guidance is organized into different levels.

It is divided into security operations, SaaS, endpoints, hybrid, information protection, IoT, and people security.

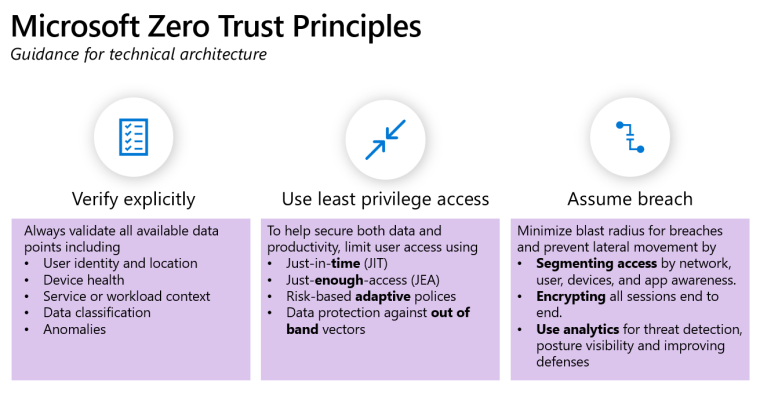

The principles of Zero Trust

Zero Trust Rapid Modernization Plan (RaMP)

Microsoft Zero Trust capabilities

A holistic approach to Zero Trust should extend to your entire digital estate – inclusive of identities, endpoints, network, data, apps, and infrastructure. Zero Trust architecture serves as a comprehensive end-to-end strategy and requires integration across the elements.

Zero Trust deployment objectives

Identity Zero Trust deployment objectives

I. Cloud identity federates with on-premises identity systems.

II. Conditional Access policies gate access and provide remediation activities.

III. Analytics improve visibility.

IV. Identities and access privileges are managed with identity governance.

Endpoint Zero Trust deployment objectives

I. Endpoints are registered with cloud identity providers. In order to monitor security and risk across multiple endpoints used by any one person, you need visibility into all devices and access points that may be accessing your resources.

II. Access is only granted to cloud-managed and compliant endpoints and apps. Set compliance rules to ensure that devices meet minimum security requirements before access is granted. Also, set remediation rules for noncompliant devices so that people know how to resolve the issue.

III. Data loss prevention (DLP) policies are enforced for corporate devices and BYOD. Control what the user can do with the data after they have access. For instance, restrict file saving to untrusted locations (such as local disk), or restrict copy-and-paste sharing with a consumer communication app or chat app to protect data.

IV. Endpoint threat detection is used to monitor device risk. Use a single pane of glass to manage all endpoints in a consistent way, and use a SIEM to route endpoint logs and transactions such that you get fewer, but actionable, alerts.

V. Access control is gated on endpoint risk for both corporate devices and BYOD. Integrate data from Microsoft Defender for Endpoint, or other Mobile Threat Defense (MTD) vendors, as an information source for device compliance policies and device Conditional Access rules. The device risk will then directly influence what resources will be accessible by the user of that device.
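The gating logic in objectives II and V can be summarized as a single access decision. The Python sketch below is a conceptual model only: the `Device` fields, the `RiskLevel` scale, and the `decide_access` function are hypothetical, and in practice these checks are configured through compliance policies and Conditional Access rather than written as code.

```python
from dataclasses import dataclass
from enum import IntEnum


class RiskLevel(IntEnum):
    # Hypothetical device risk levels, ordered so they can be compared.
    NONE = 0
    LOW = 1
    MEDIUM = 2
    HIGH = 3


@dataclass
class Device:
    registered: bool   # registered with the cloud identity provider
    compliant: bool    # meets the minimum compliance rules
    risk: RiskLevel    # risk signal from an EDR or MTD product


def decide_access(device: Device, max_risk: RiskLevel = RiskLevel.LOW) -> str:
    """Return "grant", or "block: <remediation hint>" so users know how to fix it."""
    if not device.registered:
        return "block: register the device"
    if not device.compliant:
        return "block: bring the device into compliance"
    if device.risk > max_risk:
        return "block: device risk is too high"
    return "grant"


print(decide_access(Device(True, True, RiskLevel.NONE)))  # grant
print(decide_access(Device(True, True, RiskLevel.HIGH)))  # block: device risk is too high
```

Returning a remediation hint instead of a bare "deny" mirrors the advice in objective II to tell people how to resolve a noncompliant device.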

Applications Zero Trust deployment objectives

I. Gain visibility into the activities and data in your applications by connecting them via APIs.

II. Discover and control the use of shadow IT.

III. Protect sensitive information and activities automatically by implementing policies.

IV. Deploy adaptive access and session controls for all apps.

V. Strengthen protection against cyber threats and rogue apps.

VI. Assess the security posture of your cloud environments.

Data Zero Trust deployment objectives

I. Access decisions are governed by encryption.

II. Data is automatically classified and labeled.

III. Classification is augmented by smart machine learning models.

IV. Access decisions are governed by a cloud security policy engine.

V. Prevent data leakage through DLP policies based on a sensitivity label and content inspection.
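A DLP decision like the one in objective V combines two signals: the sensitivity label on the item and what content inspection finds inside it. The sketch below models that in Python with a hypothetical label ordering and a simple regular expression for a 16-digit card-like number. Real DLP engines such as Microsoft Purview use their own sensitive information types and policy configuration, so every name and pattern here is an assumption.

```python
import re

# Hypothetical label ordering, lowest to highest sensitivity.
LABELS = ["Public", "General", "Confidential", "Highly Confidential"]

# Simplified stand-in for a sensitive information type: 16 digits in
# groups of four, optionally separated by spaces or dashes.
CARD_PATTERN = re.compile(r"\b(?:\d{4}[ -]?){3}\d{4}\b")


def allow_external_share(label: str, content: str) -> bool:
    """Block sharing outside the organization if the label is Confidential
    or higher, or if content inspection finds a card-like number."""
    if LABELS.index(label) >= LABELS.index("Confidential"):
        return False
    if CARD_PATTERN.search(content):
        return False
    return True


print(allow_external_share("General", "Quarterly newsletter"))       # True
print(allow_external_share("General", "Card 4111 1111 1111 1111"))   # False
print(allow_external_share("Confidential", "Quarterly newsletter"))  # False
```

The second call shows why both signals matter: the item is labeled General, but content inspection still catches the sensitive data.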

Infrastructure Zero Trust deployment objectives

I. Workloads are monitored and alerted to abnormal behavior.

II. Every workload is assigned an app identity—and configured and deployed consistently.

III. Human access to resources requires Just-In-Time.


Network Zero Trust deployment objectives

I. Network segmentation: Many ingress/egress cloud micro-perimeters with some micro-segmentation.

II. Threat protection: Cloud native filtering and protection for known threats.

III. Encryption: User-to-app internal traffic is encrypted.

VI. Encryption: All traffic is encrypted.

Visibility, automation, and orchestration Zero Trust deployment objectives

III. Enable additional protection and detection controls.

Translate business goals into security requirements

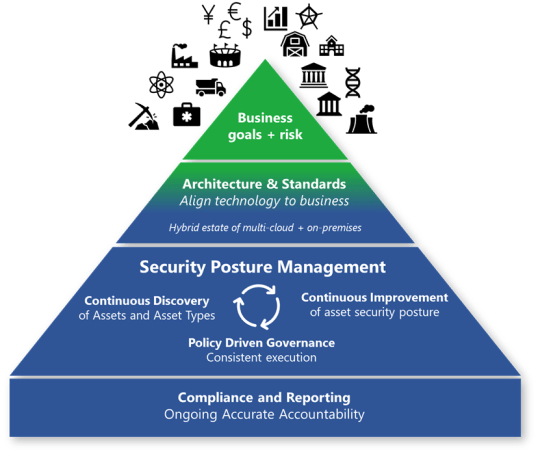

Business goals and risks

Business goals and risks provide the best direction for security. This direction ensures that security teams focus their efforts on matters that are important to the organization. It also informs risk owners using familiar language and processes in the risk management framework.

Most enterprises today operate a hybrid environment that spans:

- On-premises: Includes multiple generations of technology and often a significant amount of legacy software and hardware. This tech sometimes includes operational technology controlling physical systems with a potential life or safety impact.

- Clouds: Typically includes multiple providers for:

  - Software as a service (SaaS) applications

  - Infrastructure as a service (IaaS)

  - Platform as a service (PaaS)

The key tenets of success for governance are:

- Continuous discovery of assets and asset types: A static inventory isn’t possible in a dynamic cloud environment. Your organization must focus on the continuous discovery of assets and asset types. In the cloud, new types of services are added regularly. Workload owners dynamically spin up and down instances of applications and services as needed, making inventory management a dynamic discipline. Governance teams need to continuously discover asset types and instances to keep up with this pace of change.

- Continuous improvement of asset security posture: Governance teams should focus on improving standards, and enforcement of those standards, to keep up with the cloud and attackers. Information technology (IT) organizations must react quickly to new threats and adapt accordingly. Attackers are continuously evolving their techniques, and defenses are continuously improving and might need to be enabled. You can’t always get all the security you need into the initial configuration.

- Policy-driven governance: This governance provides consistent execution by fixing something once in policy that’s automatically applied at scale across resources. This process limits any wasted time and effort on repeated manual tasks. It’s often implemented using Azure Policy or third-party policy automation frameworks.
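Policy-driven governance means a rule is written once and evaluated against every resource instead of being fixed by hand each time. The Python sketch below is a conceptual model of that idea: a hypothetical rule requiring HTTPS-only storage accounts, applied in either audit or deny mode. It is not Azure Policy's JSON definition format or API; the resource dictionaries and field names are illustrative assumptions.

```python
from typing import Callable

Resource = dict
Rule = Callable[[Resource], bool]  # returns True when the resource is compliant


def https_only(resource: Resource) -> bool:
    # Hypothetical rule: storage accounts must only accept HTTPS traffic.
    if resource.get("type") != "storageAccount":
        return True  # the rule does not apply to other resource types
    return resource.get("httpsOnly", False)


def audit(resources: list[Resource], rule: Rule) -> list[str]:
    """Audit mode: report the names of non-compliant resources."""
    return [r["name"] for r in resources if not rule(r)]


def deny_if_noncompliant(resource: Resource, rule: Rule) -> None:
    """Deny mode: refuse to create a resource that violates the rule."""
    if not rule(resource):
        raise PermissionError(f"{resource['name']} blocked by policy")


estate = [
    {"name": "st-logs", "type": "storageAccount", "httpsOnly": True},
    {"name": "st-legacy", "type": "storageAccount", "httpsOnly": False},
    {"name": "vm-web", "type": "virtualMachine"},
]
print(audit(estate, https_only))  # ['st-legacy']
```

Because the rule is a single function, fixing it once changes the result for every resource it is applied to, which is the point of the governance tenet above.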

Innovation security

Developing new capabilities and applications requires successfully meeting three different requirement types:

- Business development (Dev): Your application must meet business and user needs, which are often rapidly evolving.

- Security (Sec): Your application must be resilient to attacks from rapidly evolving attackers and take advantage of innovations in security defenses.

- IT operations (Ops): Your application must be reliable and perform efficiently.

Waterfall and DevOps

In the waterfall model, security was traditionally a quality gate after development finishes.

DevOps expanded the traditional development model (people, process, and technology) to include operations teams. This change reduced the friction that resulted from having the development and operations teams separated. Similarly, DevSecOps expands DevOps to reduce the friction from separate or disparate security teams.

Attacker opportunities

Attackers might exploit weaknesses in:

- Development process: Attackers might find weaknesses in the application design process, for example, using weak or no encryption for communications. Or attackers might find weaknesses in the implementation of the design, for example, code that doesn’t validate input and allows common attacks like SQL injection. Additionally, attackers might implant back doors in the code that allow them to return later to exploit your environment or your customer’s environment.

- IT infrastructure: Attackers can compromise endpoint and infrastructure elements that the development process is hosted on using standard attacks. Attackers might also conduct a multistage attack that uses stolen credentials or malware to access development infrastructure from other parts of the environment. Additionally, the risk of software supply chain attacks makes it critical to integrate security into your process for both:

  - Protecting your organization: From malicious code and vulnerabilities in your source code supply chain

  - Protecting your customers: From any security issues in your applications and systems, which might result in reputation damage, liability, or other negative business impacts on your organization
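The SQL injection example in the development-process bullet comes down to whether user input is treated as data or as part of the query. This Python sketch uses the standard library's `sqlite3` module and a throwaway in-memory `users` table (made up for illustration) to contrast building SQL by string formatting with a parameterized query, the usual defense.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE users (name TEXT, role TEXT)")
conn.executemany("INSERT INTO users VALUES (?, ?)",
                 [("alice", "admin"), ("bob", "reader")])

malicious = "nobody' OR '1'='1"

# Vulnerable: the input is pasted into the SQL text, so the OR clause
# becomes part of the query and every row is returned.
unsafe_sql = f"SELECT name FROM users WHERE name = '{malicious}'"
unsafe_rows = conn.execute(unsafe_sql).fetchall()

# Safe: the ? placeholder binds the input as a value, never as SQL.
safe_rows = conn.execute(
    "SELECT name FROM users WHERE name = ?", (malicious,)
).fetchall()

print(len(unsafe_rows))  # 2
print(len(safe_rows))    # 0
```

Input validation is still useful, but parameterization removes the injection path even when validation misses something.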

The leadership imperative: Blend the cultures

Meeting these three requirements requires merging these three cultures together to ensure that all team members value all types of requirements and work together toward common goals.

Integrating these cultures and goals together into a true DevSecOps approach can be challenging, but it’s worth the investment. Many organizations experience a high level of unhealthy friction from development, IT operations, and security teams who work independently, creating issues with:

- Slow value delivery and low agility

- Quality and performance issues

- Security issues

Leadership techniques

- No one wins all the arguments

- Expect continuous improvement, not perfection

- Celebrate both common interests and unique individual values

- Develop shared understanding

  - Business urgency

  - Likely risks and threats

  - Availability requirements

- Demonstrate and model the desired behavior

- Monitor the level of security friction

  - Healthy friction: Similar to how exercise makes a muscle stronger, integrating the right level of security friction in the DevOps process strengthens the application by forcing critical thinking at the right time. If teams are learning and using those learnings to improve security, for example, considering why and how an attacker might try to compromise an application, and finding and fixing important security bugs, then they are on track.

  - Unhealthy friction: Look out for friction that impedes more value than it protects. This often happens when security tools report bugs with a high false positive rate, or when the effort to fix something exceeds the potential impact of an attack.

- Integrate security into budget planning

- Establish shared goals

Translate security requirements into technical capabilities, including security services, security products, and security processes

When you’re making the shift to the cloud, there are technical considerations around how the cloud can improve the way you manage and maintain your cloud resources and workloads.

Technical benefits

Scalability

Scalability, or the ability to scale out your resources depending on usage, utilization, and demand, is one of the main technical benefits of moving to the cloud.

Availability

On-premises, it’s more costly to build highly available infrastructure. It’s less costly to architect highly available infrastructure in the cloud.

Security and compliance

When it comes to security and compliance, Microsoft continually expands its security infrastructure and toolsets to help you keep pace with emerging security threats on global networks.

Capacity optimization

Capacity optimization, where you pay only for the resources you use over time, is another technical benefit of the cloud. The core concept to consider is how elasticity and on-demand resources help you deploy, provision, or deprovision resources more dynamically.

Design security for a resiliency strategy

An organization can never have perfect security, but it can become resilient to security attacks. Just as we are never perfectly immune to all health and safety risks in the physical world, the data and information systems we operate are never 100 percent safe from all attacks all the time.

Resilience requires taking a pragmatic view that assumes a breach. It needs continuous investment across the full lifecycle of security risk.

Resilience goals

Security resiliency is focused on supporting the resiliency of your business.

- Enable your business to rapidly innovate and adapt to the ever-changing business environment. Security should always be seeking safe ways to say yes to business innovation and technology adoption. Your organization can then adapt to unexpected changes in the business environment, like the sudden shift to working from home during COVID-19.

- Limit the impact and likelihood of disruptions before, during, and after active attacks to business operations.

Define a security strategy

Monitor and protect at cloud-scale

Security of the cloud and from the cloud

Securing software-defined datacenters

Cybersecurity resilience

Reducing risk for your security program should be aligned to your organization’s mission and shaped by three key strategic directions:

- Building resilience into your cybersecurity strategy

- Strategically increasing attacker cost

- Tactically containing attacker access.

The NIST Cybersecurity Framework is an excellent guide for security designs.

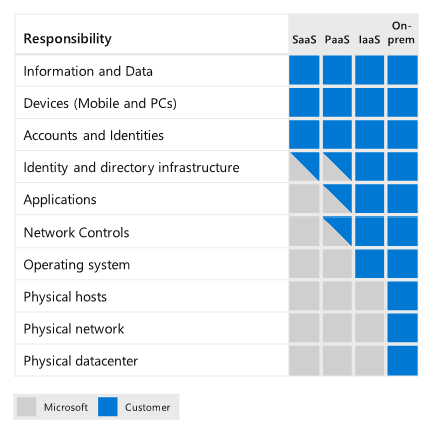

Adopting the shared responsibility model

Shifting to the cloud for security is more than a simple technology change; it’s a generational shift in technology akin to moving from mainframes to desktops and onto enterprise servers. Successfully navigating this change requires fundamental shifts in expectations and mindset by security teams. Adopting the right mindsets and expectations reduces conflict within your organization and increases the effectiveness of security teams.

Integrate a hybrid or multi-tenant environment into a security strategy

Defender for Cloud

Infrastructure comprises the hardware, software, micro-services, networking infrastructure, and facilities required to support IT services for an organization. Zero Trust infrastructure solutions assess, monitor, and prevent security threats to these services.

| Zero Trust goal | Defender for Cloud feature |
|---|---|
| Assess compliance | In Defender for Cloud, every subscription automatically has the Azure Security Benchmark security initiative assigned. Using the secure score tools and the regulatory compliance dashboard, you can get a deep understanding of your organization’s security posture. |
| Harden configuration | Assigning security initiatives to subscriptions, and reviewing the secure score, leads you to the hardening recommendations built into Defender for Cloud. Defender for Cloud periodically analyzes the compliance status of resources to identify potential security misconfigurations and weaknesses. It then provides recommendations on how to remediate those issues. |
| Employ hardening mechanisms | As well as one-time fixes to security misconfigurations, Defender for Cloud offers tools to ensure continued hardening, such as just-in-time (JIT) virtual machine (VM) access, adaptive network hardening, and adaptive application controls. |
| Set up threat detection | Defender for Cloud includes an integrated cloud workload protection platform (CWPP) that provides advanced, intelligent protection of Azure and hybrid resources and workloads. One of the Microsoft Defender plans, Microsoft Defender for servers, includes a native integration with Microsoft Defender for Endpoint. Learn more in Introduction to Microsoft Defender for Cloud. |
| Automatically block suspicious behavior | Many of the hardening recommendations in Defender for Cloud offer a deny option. This feature lets you prevent the creation of resources that don’t satisfy defined hardening criteria. Learn more in Prevent misconfigurations with Enforce/Deny recommendations. |
| Automatically flag suspicious behavior | Microsoft Defender for Cloud’s security alerts are triggered by advanced detections. Defender for Cloud prioritizes and lists the alerts, along with the information needed for you to quickly investigate the problem. Defender for Cloud also provides detailed steps to help you remediate attacks. For a full list of the available alerts, see Security alerts – a reference guide. |
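The secure score mentioned in the first row is a weighted ratio. As Microsoft describes the classic secure score, each security control earns its maximum score multiplied by the share of healthy resources it assesses, and the overall score is the sum of earned points divided by the sum of maximum points. The Python sketch below assumes that formula; the control names, point values, and resource counts are illustrative.

```python
from dataclasses import dataclass


@dataclass
class SecurityControl:
    name: str
    max_score: float
    healthy: int      # healthy resources assessed by this control
    unhealthy: int    # unhealthy resources assessed by this control

    def current_score(self) -> float:
        # Partial credit in proportion to the share of healthy resources.
        return self.max_score * self.healthy / (self.healthy + self.unhealthy)


def secure_score_percent(controls: list[SecurityControl]) -> float:
    # Assumption: controls with no assessed resources are left out entirely.
    assessed = [c for c in controls if c.healthy + c.unhealthy > 0]
    earned = sum(c.current_score() for c in assessed)
    possible = sum(c.max_score for c in assessed)
    return 100 * earned / possible


controls = [
    SecurityControl("Enable MFA", max_score=10, healthy=3, unhealthy=1),
    SecurityControl("Remediate vulnerabilities", max_score=6, healthy=1, unhealthy=1),
]
print(round(secure_score_percent(controls), 1))  # 65.6
```

Here the first control earns 7.5 of 10 points and the second 3 of 6, so the overall score is 10.5 / 16, or about 65.6 percent. Fixing the one unhealthy resource in the higher-weighted control raises the score more than fixing the one in the lower-weighted control.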

Integrate Defender for Cloud with your SIEM, SOAR, and ITSM solutions

Microsoft Defender for Cloud can stream your security alerts into the most popular security information and event management (SIEM), security orchestration, automation, and response (SOAR), and IT service management (ITSM) solutions.

Microsoft Sentinel

Defender for Cloud natively integrates with Microsoft Sentinel, Microsoft’s cloud-native security information and event management (SIEM) and security orchestration, automation, and response (SOAR) solution.

Sentinel connectors – Microsoft Sentinel includes built-in connectors for Microsoft Defender for Cloud at the subscription and tenant levels:

- Stream alerts to Microsoft Sentinel at the subscription level

- Connect all subscriptions in your tenant to Microsoft Sentinel

Stream your audit logs – An alternative way to investigate Defender for Cloud alerts in Microsoft Sentinel is to stream your audit logs into Microsoft Sentinel:

- Connect Windows security events

- Collect data from Linux-based sources using Syslog

- Connect data from Azure Activity log

Stream alerts with Microsoft Graph Security API

Defender for Cloud has out-of-the-box integration with Microsoft Graph Security API. No configuration is required and there are no additional costs.

Integrate Defender for Cloud with an Endpoint Detection and Response (EDR) solution

Microsoft Defender for Endpoint

Microsoft Defender for Endpoint is a holistic, cloud-delivered endpoint security solution.

Defender for Cloud’s integrated CWPP for machines, Microsoft Defender for servers, includes an integrated license for Microsoft Defender for Endpoint. Together, they provide comprehensive endpoint detection and response (EDR) capabilities. For more information, see Protect your endpoints.

Azure Logic Apps

Use Azure Logic Apps to build automated scalable workflows, business processes, and enterprise orchestrations to integrate your apps and data across cloud services and on-premises systems.

Integrate Defender for Cloud with other cloud environments

To view the security posture of Amazon Web Services machines in Defender for Cloud, onboard AWS accounts into Defender for Cloud.

To view the security posture of Google Cloud Platform machines in Defender for Cloud, onboard your GCP projects into Defender for Cloud.

Develop a technical and governance strategy for traffic filtering and segmentation

It’s often the case that the workload and the supporting components of a cloud architecture will need to access external assets. These assets can be on-premises, devices outside the main virtual network, or other Azure resources. Those connections can be over the internet or networks within the organization.

Key points

- Protect non-publicly accessible services with network restrictions and IP firewall.

- Use Network Security Groups (NSGs) or Azure Firewall to protect and control traffic within the VNet.

- Use Service Endpoints or Private Link for accessing Azure PaaS services.

- Use Azure Firewall to protect against data exfiltration attacks.

- Restrict access to backend services to a minimal set of public IP addresses.

- Use Azure controls over third-party solutions for basic security needs. These controls are native to the platform and are easy to configure and scale.

- Define access policies based on the type of workload and control flow between the different application tiers.
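The last key point, controlling flow between application tiers, amounts to a default-deny allowlist. The Python sketch below models a hypothetical three-tier workload. The tier names and ports are illustrative assumptions, and in Azure the same intent would be expressed with NSG or Azure Firewall rules rather than code.

```python
# Hypothetical three-tier workload: each entry is an allowed
# (source tier, destination tier, destination port) flow.
ALLOWED_FLOWS = {
    ("internet", "web", 443),
    ("web", "app", 8080),
    ("app", "db", 1433),
}


def is_allowed(source: str, destination: str, port: int) -> bool:
    """Default deny: only explicitly listed tier-to-tier flows pass."""
    return (source, destination, port) in ALLOWED_FLOWS


print(is_allowed("web", "app", 8080))      # True
print(is_allowed("web", "db", 1433))       # False: web must go through app
print(is_allowed("internet", "db", 1433))  # False
```

Listing only the flows each tier needs means a compromised web server cannot reach the database directly, even though both sit in the same virtual network.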


Azure best practices for network security

Use strong network controls

As you plan your network and the security of your network, we recommend that you centralize:

- Management of core network functions like ExpressRoute, virtual network and subnet provisioning, and IP addressing.

- Governance of network security elements, such as network virtual appliance functions like ExpressRoute, virtual network and subnet provisioning, and IP addressing.

Logically segment subnets

Azure virtual networks are similar to LANs on your on-premises network. The idea behind an Azure virtual network is that you create a network, based on a single private IP address space, on which you can place all your Azure virtual machines. The private IP address spaces available are the RFC 1918 ranges, traditionally associated with Class A (10.0.0.0/8), Class B (172.16.0.0/12), and Class C (192.168.0.0/16).
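Python's standard `ipaddress` module makes it easy to check that a proposed virtual network address space falls inside one of these private ranges and to carve it into subnets. The address spaces below are illustrative assumptions.

```python
import ipaddress

# The three private ranges listed above.
PRIVATE_RANGES = [
    ipaddress.ip_network("10.0.0.0/8"),
    ipaddress.ip_network("172.16.0.0/12"),
    ipaddress.ip_network("192.168.0.0/16"),
]


def in_private_range(address_space: str) -> bool:
    """True if the whole CIDR block fits inside one of the private ranges."""
    net = ipaddress.ip_network(address_space)
    return any(net.subnet_of(r) for r in PRIVATE_RANGES)


print(in_private_range("10.1.0.0/16"))      # True
print(in_private_range("172.32.0.0/16"))    # False: outside 172.16.0.0/12
print(in_private_range("192.168.10.0/24"))  # True

# Logically segment a /16 virtual network into /24 subnets.
vnet = ipaddress.ip_network("10.1.0.0/16")
subnets = list(vnet.subnets(new_prefix=24))
print(subnets[0], subnets[1])  # 10.1.0.0/24 10.1.1.0/24
print(len(subnets))            # 256
```

Azure reserves five IP addresses in every subnet, so each /24 here leaves 251 usable addresses.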

Adopt a Zero Trust approach

Best practice: Give Conditional Access to resources based on device, identity, assurance, network location, and more.

Detail: Azure AD Conditional Access lets you apply the right access controls by implementing automated access control decisions based on the required conditions. For more information, see Manage access to Azure management with Conditional Access.

Best practice: Enable port access only after workflow approval.

Detail: You can use just-in-time VM access in Microsoft Defender for Cloud to lock down inbound traffic to your Azure VMs, reducing exposure to attacks while providing easy access to connect to VMs when needed.

Best practice: Grant temporary permissions to perform privileged tasks, which prevents malicious or unauthorized users from gaining access after the permissions have expired. Access is granted only when users need it.

Detail: Use just-in-time access in Azure AD Privileged Identity Management or in a third-party solution to grant permissions to perform privileged tasks.
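Both practices above follow the same pattern: access is created only after approval and disappears on its own when a time window closes. The Python sketch below models that pattern. `JitGrant`, `request_access`, `has_access`, and the one-hour default window are hypothetical and do not correspond to the Defender for Cloud or Privileged Identity Management APIs.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta, timezone
from typing import Optional


@dataclass
class JitGrant:
    principal: str
    target: str          # e.g. "vm-web:3389" or a privileged role name
    expires_at: datetime


def request_access(principal: str, target: str, approved: bool,
                   hours: int = 1, now: Optional[datetime] = None) -> Optional[JitGrant]:
    """Issue a time-bounded grant only after the request is approved."""
    if not approved:
        return None
    now = now or datetime.now(timezone.utc)
    return JitGrant(principal, target, now + timedelta(hours=hours))


def has_access(grant: Optional[JitGrant], now: Optional[datetime] = None) -> bool:
    """Access exists only while an approved grant has not yet expired."""
    now = now or datetime.now(timezone.utc)
    return grant is not None and now < grant.expires_at


start = datetime(2024, 1, 1, 9, 0, tzinfo=timezone.utc)
grant = request_access("alice", "vm-web:3389", approved=True, hours=1, now=start)
print(has_access(grant, start + timedelta(minutes=30)))  # True
print(has_access(grant, start + timedelta(hours=2)))     # False: grant expired
```

Nothing has to remember to revoke the grant: once `expires_at` passes, the check fails, which is what prevents use of the permission after it has expired.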

Control routing behavior

When you put a virtual machine on an Azure virtual network, the VM can connect to any other VM on the same virtual network, even if the other VMs are on different subnets. This is possible because a collection of system routes enabled by default allows this type of communication. These default routes allow VMs on the same virtual network to initiate connections with each other, and with the internet (for outbound communications to the internet only).

Configure user-defined routes when you deploy a security appliance for a virtual network.
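Route selection is what makes a user-defined route effective. Azure picks the route with the longest matching prefix, and when prefixes tie, a user-defined route is preferred over BGP and system routes. The Python sketch below models only that selection step; the `Route` class, the route table, and the firewall address 10.0.100.4 are illustrative assumptions.

```python
import ipaddress
from dataclasses import dataclass
from typing import Optional

# Lower number wins when two routes have the same prefix length.
SOURCE_PREFERENCE = {"user": 0, "bgp": 1, "system": 2}


@dataclass
class Route:
    prefix: str
    next_hop: str
    source: str  # "user", "bgp", or "system"


def select_route(routes: list[Route], destination: str) -> Optional[Route]:
    dest = ipaddress.ip_address(destination)
    matches = [r for r in routes if dest in ipaddress.ip_network(r.prefix)]
    if not matches:
        return None
    # Longest prefix first, then prefer user over BGP over system routes.
    return min(matches, key=lambda r: (-ipaddress.ip_network(r.prefix).prefixlen,
                                       SOURCE_PREFERENCE[r.source]))


routes = [
    Route("10.0.0.0/16", "VirtualNetwork", "system"),
    Route("0.0.0.0/0", "Internet", "system"),
    Route("0.0.0.0/0", "10.0.100.4", "user"),  # UDR: send egress to a firewall
]
print(select_route(routes, "10.0.2.5").next_hop)  # VirtualNetwork
print(select_route(routes, "8.8.8.8").next_hop)   # 10.0.100.4
```

Traffic inside the virtual network still follows the more specific system route, while internet-bound traffic is steered to the security appliance by the user-defined default route.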

Deploy perimeter networks for security zones

You can use Azure or a third-party solution to provide an additional layer of security between your assets and the internet:

- Azure native controls. Azure Firewall and the web application firewall in Application Gateway offer basic security with a fully stateful firewall as a service, built-in high availability, unrestricted cloud scalability, FQDN filtering, support for OWASP core rule sets, and simple setup and configuration.

- Third-party offerings. Search the Azure Marketplace for next-generation firewall (NGFW) and other third-party offerings that provide familiar security tools and significantly enhanced levels of network security. Configuration might be more complex, but a third-party offering might allow you to use existing capabilities and skillsets.

Dedicated WAN links

- Site-to-site VPN. It’s a trusted, reliable, and established technology, but the connection takes place over the internet. Bandwidth is constrained to a maximum of about 1.25 Gbps. Site-to-site VPN is a desirable option in some scenarios.

- Azure ExpressRoute. We recommend that you use ExpressRoute for your cross-premises connectivity. ExpressRoute lets you extend your on-premises networks into the Microsoft cloud over a private connection facilitated by a connectivity provider. With ExpressRoute, you can establish connections to Microsoft cloud services like Azure, Microsoft 365, and Dynamics 365. ExpressRoute is a dedicated WAN link between your on-premises location (or a Microsoft Exchange hosting provider) and the Microsoft cloud. Because this is a telco connection, your data doesn’t travel over the internet, so it isn’t exposed to the potential risks of internet communications.

Disable RDP/SSH Access to virtual machines

Disable direct RDP and SSH access to your Azure virtual machines from the internet. After direct RDP and SSH access from the internet is disabled, you have other options that you can use to access these VMs for remote management.
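Before you disable direct RDP and SSH access, it helps to find the rules that currently allow it. This Python sketch flags inbound allow rules for ports 22 and 3389 whose source is any address or the internet. The `InboundRule` shape is a hypothetical simplification of an NSG rule (real rules use port ranges, priorities, and more fields), so treat it as an illustration of the check, not an audit tool.

```python
from dataclasses import dataclass

MANAGEMENT_PORTS = {22, 3389}                      # SSH and RDP
INTERNET_SOURCES = {"*", "Internet", "0.0.0.0/0"}  # "any" or the Internet tag


@dataclass
class InboundRule:
    name: str
    source: str    # address prefix or service tag
    port: int
    access: str    # "Allow" or "Deny"


def exposed_management_rules(rules: list[InboundRule]) -> list[str]:
    """Names of rules that allow SSH or RDP in from the internet.
    Simplified: ignores port ranges and rule priority."""
    return [r.name for r in rules
            if r.access == "Allow"
            and r.port in MANAGEMENT_PORTS
            and r.source in INTERNET_SOURCES]


rules = [
    InboundRule("allow-rdp-any", "*", 3389, "Allow"),
    InboundRule("allow-ssh-bastion", "10.0.250.0/26", 22, "Allow"),
    InboundRule("allow-https", "Internet", 443, "Allow"),
]
print(exposed_management_rules(rules))  # ['allow-rdp-any']
```

The SSH rule scoped to an internal range is not flagged, which matches the goal: management access stays possible, just not directly from the internet.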

Secure your critical Azure service resources to only your virtual networks

Use Azure Private Link to access Azure PaaS Services (for example, Azure Storage and SQL Database) over a private endpoint in your virtual network. Private Endpoints allow you to secure your critical Azure service resources to only your virtual networks. Traffic from your virtual network to the Azure service always remains on the Microsoft Azure backbone network. Exposing your virtual network to the public internet is no longer necessary to consume Azure PaaS Services.

Prevent dangling DNS entries and avoid subdomain takeover

Subdomain takeovers are a common, high-severity threat for organizations that regularly create and delete many resources. A subdomain takeover can occur when you have a DNS record that points to a deprovisioned Azure resource. Such DNS records are also known as “dangling DNS” entries. CNAME records are especially vulnerable to this threat. Subdomain takeovers enable malicious actors to redirect traffic intended for an organization’s domain to a site performing malicious activity.

To identify DNS entries within your organization that might be dangling, use Microsoft’s GitHub-hosted PowerShell tool “Get-DanglingDnsRecords”.

This tool helps Azure customers list all domains with a CNAME associated with an existing Azure resource that was created in their subscriptions or tenants.

If your CNAMEs are in other DNS services and point to Azure resources, provide the CNAMEs in an input file to the tool.
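The core check behind dangling-DNS detection is a CNAME that points at an Azure hostname which no longer exists. The Python sketch below shows that check under stated assumptions: the DNS records and the set of live Azure hostnames are passed in as plain data (gathering them is what a tool like Get-DanglingDnsRecords automates), and the suffix list is a small, incomplete illustration. It is not the logic of Microsoft's tool.

```python
# A few Azure-hosted suffixes, for illustration only (not a complete list).
AZURE_SUFFIXES = (".azurewebsites.net", ".cloudapp.azure.com", ".blob.core.windows.net")


def find_dangling_cnames(cname_records: dict[str, str],
                         existing_hostnames: set[str]) -> list[str]:
    """Return record names whose CNAME targets an Azure hostname that no
    longer exists in your subscriptions."""
    dangling = []
    for name, target in cname_records.items():
        target = target.lower().rstrip(".")  # normalize case and trailing dot
        if target.endswith(AZURE_SUFFIXES) and target not in existing_hostnames:
            dangling.append(name)
    return sorted(dangling)


records = {
    "shop.contoso.com": "contoso-shop.azurewebsites.net",
    "old.contoso.com": "contoso-old.azurewebsites.net.",
    "mail.contoso.com": "mail.protection.outlook.com",
}
live = {"contoso-shop.azurewebsites.net"}
print(find_dangling_cnames(records, live))  # ['old.contoso.com']
```

Each name this returns should either be deleted from DNS or pointed at a resource you still own, so an attacker cannot claim the freed Azure hostname.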

Secure your networks

Routing all on-premises user requests through Azure Firewall

The user-defined route in the gateway subnet blocks all user requests other than those received from on-premises. The route passes allowed requests to the firewall, and these requests are passed on to the resources in the spoke virtual networks if they are allowed by the firewall rules. You can add other routes, but make sure they don’t inadvertently bypass the firewall or block administrative traffic intended for the management subnet.

Using NSGs to block/pass traffic to spoke virtual network subnets

Traffic to and from resource subnets in spoke virtual networks is restricted by using NSGs. If you have a requirement to expand the NSG rules to allow broader access to these resources, weigh these requirements against the security risks. Each new inbound pathway represents an opportunity for accidental or purposeful data leakage or application damage.

Use Azure Virtual Network Manager to create baseline Security Admin rules

Azure Virtual Network Manager (AVNM) lets you create baselines of security rules that can take priority over network security group (NSG) rules. Security admin rules are evaluated before NSG rules and work like NSG rules, with support for prioritization, service tags, and L3-L4 protocols. This lets central IT enforce a baseline of security rules while spoke virtual network owners independently manage additional NSG rules. To support a controlled rollout of security rule changes, the AVNM deployments feature lets you safely release potentially breaking configuration changes to your hub-and-spoke environments.
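The evaluation order described above can be modeled in a few lines. This is a simplified Python sketch, not the AVNM API: it assumes single-port rules, and it models the three security admin rule actions (Allow, Always Allow, and Deny) as assumptions based on the AVNM documentation. Deny stops traffic, Always Allow permits it without consulting NSGs, and Allow hands the decision to the NSG rules.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Rule:
    priority: int   # lower number is evaluated first
    port: int       # destination port (single port for simplicity)
    action: str     # "Allow" or "Deny"; admin rules may also use "AlwaysAllow"


def first_match(rules: list[Rule], port: int) -> Optional[Rule]:
    """Return the highest-priority rule that matches the port, if any."""
    for rule in sorted(rules, key=lambda r: r.priority):
        if rule.port == port:
            return rule
    return None


def evaluate(admin_rules: list[Rule], nsg_rules: list[Rule], port: int) -> str:
    admin = first_match(admin_rules, port)
    if admin is not None and admin.action == "Deny":
        return "Deny"    # blocked before any NSG rule is consulted
    if admin is not None and admin.action == "AlwaysAllow":
        return "Allow"   # allowed even if an NSG rule would deny it
    # No admin match, or an admin "Allow": the NSG rules decide.
    nsg = first_match(nsg_rules, port)
    # Simplification: no NSG match stands in for the default deny-all rule.
    return nsg.action if nsg is not None else "Deny"


admin_rules = [Rule(100, 3389, "Deny"), Rule(110, 443, "AlwaysAllow"), Rule(120, 80, "Allow")]
nsg_rules = [Rule(100, 3389, "Allow"), Rule(110, 443, "Deny"), Rule(120, 80, "Deny")]
print(evaluate(admin_rules, nsg_rules, 3389))  # Deny: admin rule wins over the NSG allow
print(evaluate(admin_rules, nsg_rules, 443))   # Allow: AlwaysAllow skips the NSG deny
print(evaluate(admin_rules, nsg_rules, 80))    # Deny: admin Allow defers to the NSG
```

The 3389 case is the central-IT baseline at work: a spoke owner's NSG rule that allows RDP cannot override the admin Deny.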

Things to remember

These are the topics for securing your environments. Expect exam questions on all of them, so learn each one.

| Security Topic | Description |
|---|---|
| Security design principles | These principles describe a securely architected system hosted on cloud or on-premises datacenters, or a combination of both. |
| Governance, risk, and compliance | How is the organization’s security going to be monitored, audited, and reported? What types of risks does the organization face while trying to protect personally identifiable information, intellectual property (IP), and financial information? Are there specific industry, government, or regulatory requirements that dictate or recommend criteria that your organization’s security controls must meet? |
| Regulatory compliance | Governments and other organizations frequently publish standards to help define good security practices (due diligence) so that organizations can avoid being negligent in security. |
| Administration | Administration is the practice of monitoring, maintaining, and operating Information Technology (IT) systems to meet service levels that the business requires. Administration introduces some of the highest impact security risks because performing these tasks requires privileged access to a broad set of systems and applications. |
| Applications and services | Applications and the data associated with them ultimately act as the primary store of business value on a cloud platform. |
| Identity and access management | Identity provides the basis of a large percentage of security assurances. |
| Information protection and storage | Protecting data at rest is required to maintain confidentiality, integrity, and availability assurances across all workloads. |
| Network security and containment | Network security has been the traditional linchpin of enterprise security efforts. However, cloud computing has increased the requirement for network perimeters to be more porous, and many attackers have mastered the art of attacks on identity system elements (which nearly always bypass network controls). |
| Security Operations | Security operations maintain and restore the security assurances of the system as live adversaries attack it. The tasks of security operations are well described by the NIST Cybersecurity Framework functions of Detect, Respond, and Recover. |
